Microsoft AI can clone a voice from only three seconds of audio

VALL-E can synthesize a person's voice from as little as three seconds of sample audio

Microsoft's new text-to-speech AI can clone a voice, including its tone and pitch, from an audio snippet only three seconds long.

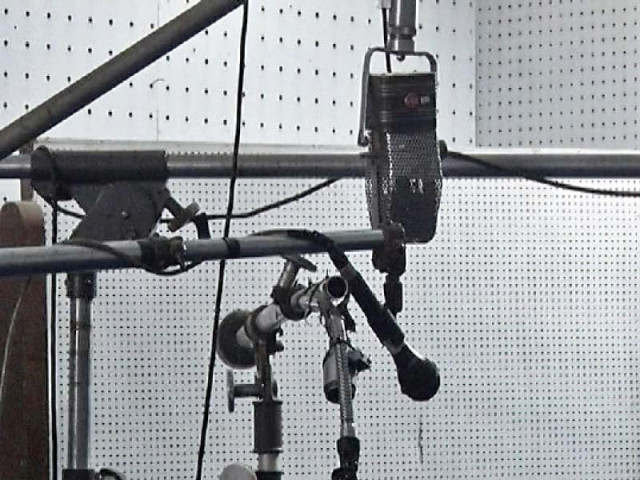

Microsoft describes VALL-E as a "neural codec language model." The system behind it is complex, but using it is simple: it takes a short audio sample and a piece of text as input.

The program's creators hope it can be used for high-quality text-to-speech applications such as speech editing and audio content creation. VALL-E is built on EnCodec, a neural audio codec that Meta announced last October.

VALL-E generates discrete audio codec codes from a text prompt and an acoustic prompt. It analyzes how a person sounds and, using EnCodec, breaks that information into discrete components called tokens. It then draws on its training data to predict how that voice would sound speaking phrases that were not in the three-second sample.
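To make "breaking audio into discrete components" concrete, here is a toy sketch of residual vector quantization, the general technique EnCodec uses to turn continuous audio features into a few integer codes per frame. It is not Microsoft's or Meta's code: the two tiny 2-D codebooks and the input frame are invented for illustration, whereas the real codec learns much larger codebooks from data. Each stage picks the closest codeword and passes the leftover error to the next stage, so the list of chosen indices is the "discrete code" for the frame.

```python
# Toy residual vector quantization (RVQ). Codebooks and the input frame are
# invented illustrative values, not EnCodec's learned codebooks.

def nearest(codebook, vector):
    """Return (index, codeword) of the codeword closest to vector (squared L2)."""
    best = min(
        range(len(codebook)),
        key=lambda i: sum((c - v) ** 2 for c, v in zip(codebook[i], vector)),
    )
    return best, codebook[best]


def rvq_encode(vector, codebooks):
    """Quantize stage by stage; each stage encodes what the previous one left over."""
    residual = list(vector)
    codes = []
    for codebook in codebooks:
        idx, codeword = nearest(codebook, residual)
        codes.append(idx)
        residual = [r - c for r, c in zip(residual, codeword)]
    return codes


def rvq_decode(codes, codebooks):
    """Rebuild an approximation by summing the chosen codeword from every stage."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out


# Hypothetical codebooks: a coarse first stage and a finer second stage.
CODEBOOKS = [
    [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0], [1.0, -1.0]],
    [[0.0, 0.0], [0.25, 0.25], [0.25, -0.25], [-0.25, 0.25]],
]

frame = [1.2, 0.8]  # one made-up feature vector for a single audio frame
codes = rvq_encode(frame, CODEBOOKS)
print(codes)                          # [1, 2]
print(rvq_decode(codes, CODEBOOKS))   # [1.25, 0.75]
```

The second stage refines the first: decoding only the first code gives `[1.0, 1.0]`, while adding the second brings the result closer to the original frame. A language model like VALL-E's then only has to predict sequences of these small integers rather than raw waveforms.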

VALL-E was trained on an audio library assembled by Meta that contains 60,000 hours of English speech from more than 7,000 speakers. For good results, the voice in the three-second sample must closely match a voice in the training data.

Samples provided by Microsoft show that the program can produce variations in voice tone when the random seed used in generation is changed. VALL-E can also imitate the acoustic environment of the sample audio: if the sample was recorded over a telephone line, for example, the synthesized speech can sound like a phone call too.
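Changing the seed changes the output because generation involves random sampling. The toy sketch below illustrates only that principle: it samples discrete tokens from a fixed, invented probability table using Python's seeded `random.Random`. The token vocabulary, the probabilities, and the `sample_tokens` helper are hypothetical; in VALL-E the probabilities come from the trained model and change at every step.

```python
import random

# Hypothetical token vocabulary and probabilities, invented for illustration.
TOKENS = [0, 1, 2, 3]
PROBS = [0.4, 0.3, 0.2, 0.1]


def sample_tokens(seed, length=8):
    """Draw `length` tokens with a private RNG seeded by `seed`."""
    rng = random.Random(seed)
    return rng.choices(TOKENS, weights=PROBS, k=length)


a = sample_tokens(seed=1)
b = sample_tokens(seed=1)
c = sample_tokens(seed=2)
print(a == b)  # True: the same seed reproduces the same sequence
print(a == c)  # a different seed gives a different sequence
```

Fixing the seed makes a run reproducible, while varying it is a cheap way to get several different renditions of the same text from the same prompt.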

Many news sites use machine-powered dictation services, but conventional speech-generation programs require a large amount of input audio. More importantly, their voices often do not sound human and cannot convey emotion or inflection. VALL-E needs far less input and produces more natural, accurate results. However, the model also carries risks of misuse, such as spoofing voice identification or impersonating a specific speaker.
