This text generator model can create fake news

Some claim it performs better than humans, and it can generate realistic stories, poems and articles

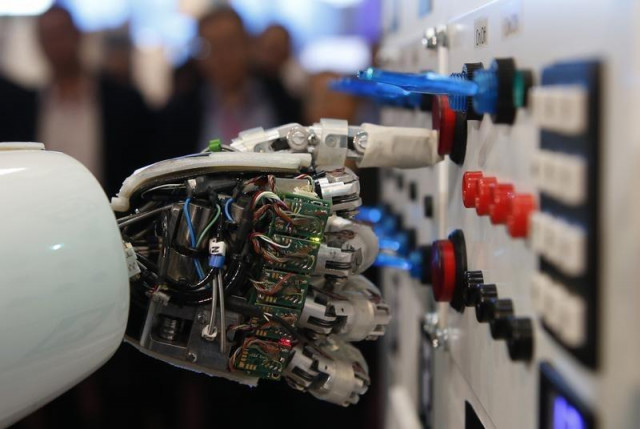

The research centre, which has two laboratories operating under it - a Smart City Lab and a Neuro Computation Lab, has several completed and on-going projects under its belt. PHOTO: REUTERS

The artificial intelligence system, which some claim performs better than humans, is able to generate realistic stories, poems and articles.

However, an updated version of the system, which could be used to create fake news or abusive spam on social media, has now been released.

GPT-2 is trained on a dataset of eight million web pages and is designed to adapt to the style and content of the text it is given.


"Due to our concerns about malicious applications of the technology, we are not releasing the trained model. As an experiment in responsible disclosure, we are instead releasing a much smaller model for researchers to experiment with," said OpenAI, the firm behind GPT-2.

The firm decided to release a smaller version of the program, with fewer parameters (the internal values a model learns during training) than the full model.


"I'm terrified of GPT-2 because it represents the kind of technology that evil humans are going to use to manipulate the population - and in my opinion that makes it more dangerous than any gun," said tech writer Tristan Greene, as quoted in the BBC article.

This month, OpenAI decided to release a larger version of the model with many more parameters.

This article was originally published by the BBC.
